Zombie VMs may make up as much as half of the public cloud, but enterprise IT needs to embrace it anyway, because that’s where the best new stuff is being built.

Microsoft wants you to believe that Amazon Web Services is “a bridge to nowhere,” but nothing could be further from the truth. In fact, as Gartner says, “New stuff [workloads] tends to go to the public cloud … and new stuff is simply growing faster” than the traditional workloads that currently feed the data center.

Most of that “new stuff” is heading for AWS, although Microsoft Azure is an increasingly credible play.

In fact, both reflect the reality that the future belongs to the public cloud. This is in part a matter of price, as ActiveState’s Bernard Golden posits, but it’s mostly a matter of flexibility and convenience. While the convenience may lead to plenty of waste in the form of unused VMs, it’s a necessary evil on the road to building the future.

Public cloud: Big and getting bigger

Analysts now peg the value of Amazon Web Services at a whopping $50 billion. That’s an amazing figure, and it’s buttressed by an estimate that AWS will generate $20 billion in annual revenue by 2020, up from roughly $5 billion in 2014.
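To put that revenue estimate in perspective, here is a minimal sketch of the compound annual growth rate it implies. The helper name `cagr` is made up for illustration, and the inputs are simply the rough figures quoted above, not audited numbers.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the text: roughly $5 billion in 2014, an estimated $20 billion by 2020.
aws_growth = cagr(5.0, 20.0, 2020 - 2014)
print(f"Implied annual growth: {aws_growth:.1%}")  # prints "Implied annual growth: 26.0%"
```

Quadrupling revenue in six years works out to growth of about 26 percent a year, every year.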

We’ve had doubters hate on such prognostications before, and they’ve been wrong — every single time.

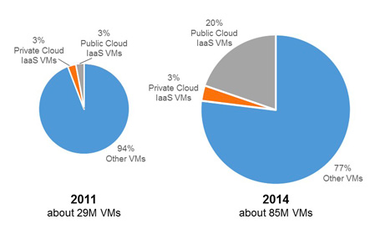

Clearly, there’s an industrywide, tectonic shift toward the scale and convenience of public cloud computing, as Gartner analyst Thomas Bittman’s research shows.

According to Gartner, the overall number of active VMs tripled from 2011 to 2014.

What’s clear from these charts is that, overall, the number of active VMs has tripled, as has the number of private cloud VMs — not bad.

But much more impressive is the groundswell for VMs running in the public cloud. As Bittman highlights, “The number of active VMs in the public cloud has increased by a factor of twenty. Public cloud IaaS now accounts for about 20 percent of all VMs – and there are now roughly six times more active VMs in the public cloud than in on-premises private clouds.”

In other words, the private cloud is growing at a reasonable clip, but the public cloud is growing at a torrid pace.

A false number?

Of course, a significant chunk of that public cloud growth is vapor. As Bittman notes, “Lifecycle management and governance for VMs in the public cloud are not nearly as rigorous as management and governance in on-premises private clouds,” leading to 30 to 50 percent of public cloud VMs being “zombies,” or VMs that are paid for but not used.

That estimate may be conservative. In my own conversations with a variety of enterprises large and small, I’ve seen VM waste as high as 80 percent.
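For a sense of how a team might measure its own zombie share, here is a rough sketch. Everything in it is an assumption for illustration: the `VM` record, the `zombie_report` helper, the made-up fleet, and especially the rule that a VM counts as a zombie when its average CPU sits at or below 2 percent. Gartner’s definition is simply “paid for but not used,” and a real audit would look at more than CPU.

```python
from dataclasses import dataclass


@dataclass
class VM:
    name: str
    monthly_cost: float     # dollars billed per month
    avg_cpu_percent: float  # average CPU utilization over the review window


def zombie_report(fleet, idle_threshold=2.0):
    """Return (zombie names, share of VMs, share of spend) for idle VMs.

    A VM is treated as a zombie when its average CPU is at or below
    idle_threshold. That cutoff is an assumption, not an industry standard.
    """
    zombies = [vm for vm in fleet if vm.avg_cpu_percent <= idle_threshold]
    total_cost = sum(vm.monthly_cost for vm in fleet)
    zombie_cost = sum(vm.monthly_cost for vm in zombies)
    share_of_vms = len(zombies) / len(fleet) if fleet else 0.0
    share_of_spend = zombie_cost / total_cost if total_cost else 0.0
    return [vm.name for vm in zombies], share_of_vms, share_of_spend


# Made-up fleet for illustration only.
fleet = [
    VM("web-1", 120.0, 35.0),
    VM("web-2", 120.0, 0.5),
    VM("batch-old", 300.0, 0.0),
    VM("db-1", 400.0, 60.0),
]
names, vm_share, spend_share = zombie_report(fleet)
print(names, f"{vm_share:.0%} of VMs", f"{spend_share:.0%} of spend")
```

Counting by spend as well as by head count matters, since a single forgotten large instance can cost more than a dozen idle small ones.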

Not that this will be much of a surprise to data center pros. According to McKinsey estimates, data center utilization stands at a sorry 6 percent. While Gartner gives hope — estimating utilization at 12 percent — this still speaks of terrible inefficiencies in hardware use.

In other words, there’s always a fair amount of waste in IT, whether it’s running in public or private clouds or in traditional data centers. Yes, there are tools like Cloudyn to help track actual cloud usage. Even AWS, which theoretically stands to lose revenue if customers turn off 30 to 50 percent of unused capacity, has its CloudWatch monitoring service to help its customers avoid waste. But that isn’t really the point.

Inventing the future

The reality is that public cloud has exploded in popularity because it’s helping enterprises transform their businesses. The very convenience that makes it so easy for developers to spin up new server instances also makes it easy to forget those instances are still running when the next project comes along.

This is a strength, not a weakness, of the public cloud. As Matt Wood, AWS head of data science, told me in an interview recently:

“Those that go out and buy expensive infrastructure find that the problem scope and domain shift really quickly. By the time they get around to answering the original question, the business has moved on. You need an environment that is flexible and allows you to quickly respond to changing big data requirements. Your resource mix is continually evolving; if you buy infrastructure it’s almost immediately irrelevant to your business because it’s frozen in time. It’s solving a problem you may not have or care about any more.”

Sure, it would be more cost-effective to shut down unused VMs. But in the rush to invent the future, it can be expensive to bother. Back to Bittman, who characterizes public vs. private cloud workloads as follows:

“Public cloud VMs are much more likely to be used for horizontally scalable, cloud-friendly, short-term instances, while private cloud tends to have much more vertically scalable, traditional, long-term instances. There are certainly examples of new cloud-friendly instances in private clouds, and examples of traditional workloads migrated to public cloud IaaS, but those aren’t the norm. New stuff tends to go to the public cloud, while doing old stuff in new ways tends to go to private clouds.”

Pay attention to that last line, because it’s the clearest indication of why every company needs to invest heavily in the public cloud, and why private cloud feels to me like a short-term stopgap. Yes, there may be workloads that today feel inappropriate for the public cloud. But that won’t last.

Via: infoworld
