Apple’s two solar farms and one fuel cell farm near its data center in North Carolina are now all live and generating power. The projects are unprecedented in the industry and have helped usher in real change.

Check out this special report.

——-

Last week a utility in North Carolina announced something seemingly mundane on the surface, but it was a transcendent moment for those who have been following the clean energy sector. Duke Energy, which generates the bulk of its energy in the state from dirty and aging coal and nuclear plants, officially asked the state’s regulators if it could sell clean power (from new sources like solar and wind farms) to large energy customers that were willing to buy it. And yes, shockingly enough, thanks to restrictive regulations and an electricity industry that moves at a glacial pace, this previously wasn’t allowed.

For years Duke Energy largely ignored clean energy in North Carolina (with a few exceptions), mostly with the explanation that customers wouldn’t pay a premium for it. But it turns out that when those large energy customers are internet companies (with globally influential consumer brands, huge data centers that suck up lots of energy, and large margins that give them leeway to experiment), they can be pretty persuasive.

Moments after the utility’s filing hit the public record on Friday, Google, which has been publicly working with Duke Energy since the spring of this year on the clean energy buying project and has a large energy-consuming data center in Lenoir, North Carolina, published a blog post celebrating the utility’s move. Google has spent over a billion dollars — through both equity investments and power buying contracts — on clean energy projects over the years, and has been a very public face of the movement to “green” the internet.

Apple’s solar farm next to its data center in Maiden, North Carolina

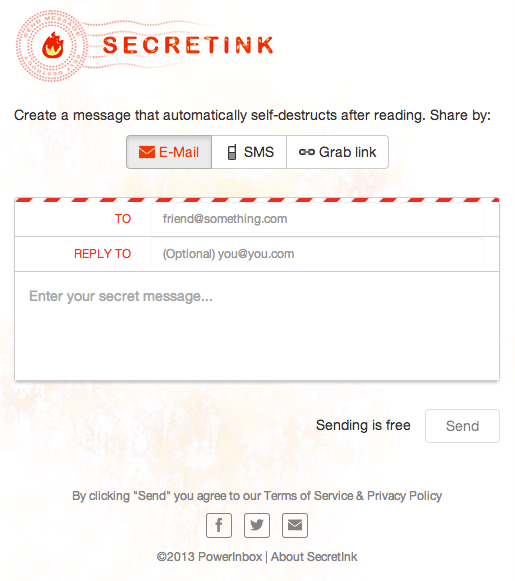

But absent from a lot of the public dialogue has been the one company that arguably has had a greater effect on bringing clean power to the state of North Carolina than any other: Apple. While the state’s utility has just now become more willing to supply clean energy to corporate customers, several years ago Apple took the stance that if clean power wasn’t going to be available from the local utility for its huge data center in Maiden, North Carolina, it would, quite simply, build its own.

In an unprecedented move, one that hasn’t yet been repeated by other companies, Apple spent millions of dollars building two massive solar panel farms and a large fuel cell farm near its data center. These projects are now fully operational; similar facilities (owned by utilities) have cost in the range of $150 million to $200 million to build. Apple’s are the largest privately owned clean energy facilities in the U.S., and more importantly, they represent an entirely new way for an internet company to source and think about power.

Apple has long been reticent about speaking to the media about its operations, green or otherwise. But I’ve pieced together a much more detailed picture of its clean energy operations after talking to dozens of people over the years. And last week I got a chance to see these fully operational facilities for myself.

I walked around these pioneering landscapes, took these exclusive photos, and pondered why Apple made this move and why it’s important. This is Apple’s story of clean power plans, told comprehensively for the first time.

Apple as a power pioneer

When Duke Energy’s news hit the wires on Friday, I was flying back from a day of driving around Apple’s solar farms and fuel cell farm. In the summer of 2012, I took the same drive around North Carolina, visiting not just Apple’s data center, but also Facebook’s and Google’s, all of which are within an hour or two’s drive of each other. The internet companies built their data centers in this North Carolina corridor to serve East Coast traffic, and because (among many reasons) power is cheap and readily available. Historically, however, it has been pretty dirty.

Back in the summer of 2012 Apple had already surprised the world by starting construction on its first solar farm. During my road trip then, the plot of land across the street from Apple’s data center had been cleared and poles that would eventually hold solar panels were being installed. A sign in front of the entrance to the plot of land read “Dolphin Solar,” a name that Apple adopted to keep the project under wraps. Apple has long taken a secretive approach to building its clean energy projects, which hasn’t necessarily been all that beneficial for its public image around the issue.

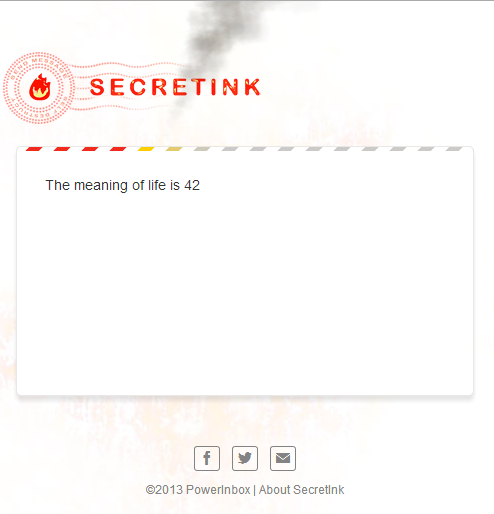

But now all of Apple’s clean power farms are fully constructed, connected to the grid, generating clean power, and — as I was happy to see — very visible from public areas. The 100-acre, 20 megawatt (MW) solar farm across the street from Apple’s data center was finished in 2012, and even cars on the highway next to the huge area of land can’t help but catch sporadic glimpses of panels when quickly driving by.

Adjacent to Apple’s data center is a 10 MW fuel cell farm, which uses fuel cells from Silicon Valley company Bloom Energy and which has been providing energy since earlier this year. Fuel cells are devices that use a chemical reaction to create electricity from a fuel like natural gas (or in Apple’s case biogas) and oxygen.

Apple’s second 20 MW solar panel farm, which is about 15 miles away from the data center near the town of Conover, North Carolina, is also up and running. All told, the three facilities are creating 50 MW of power, which is about 10 MW more than what Apple’s data center uses. Because of state laws, the energy is being pumped into the power grid, and Apple then uses the energy it needs from the grid. But this setup also means Apple doesn’t need large batteries, or other forms of energy storage, to keep the power going when the sun goes down and its solar panels stop producing electricity.
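The capacity math is easy to check with a quick sketch. It treats the three figures as peak (nameplate) capacities, which is my reading of the numbers rather than something Apple has spelled out, and the function names are made up for illustration.

```python
# Capacities reported in this article, in megawatts (MW).
# Assumption: these are peak (nameplate) figures, not round-the-clock output.
CAPACITY_MW = {
    "Maiden solar farm": 20,
    "Maiden fuel cell farm": 10,
    "Conover solar farm": 20,
}


def total_capacity_mw(capacities):
    """Add up the capacity of every facility."""
    return sum(capacities.values())


def implied_data_center_load_mw(total_mw, surplus_mw=10):
    """Back out the data center's draw from the reported surplus of about 10 MW."""
    return total_mw - surplus_mw


total = total_capacity_mw(CAPACITY_MW)
load = implied_data_center_load_mw(total)
print(f"Total clean power capacity: {total} MW")
print(f"Implied data center load: about {load} MW")
```

Keep in mind that the solar farms only approach their 20 MW figures in strong sun, which is one reason feeding the grid and drawing back from it is simpler than storing the power on site.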

The solar farms

Apple’s solar farm next to its data center in Maiden, North Carolina

Apple’s solar panel farms were built and are operated by Bay Area company SunPower. SunPower manufactures high-efficiency solar panels and solar panel trackers, and also develops solar panel projects like Apple’s. The solar farm across from the data center has over 50,000 panels on 100 acres, and it took about a year to build the entire thing.

Each solar panel on Apple’s farms has a microcontroller on its back, and the panels are attached to long, large trackers (the steel poles in the picture). The computers automatically and gradually tilt the solar panels so that the faces of the panels follow the sun throughout the day. The above picture was taken in the late morning, so by the end of the day, the panels will have completely rotated to face where I was standing. The trackers used are single-axis trackers, which rotate around one axis and are less complex and less expensive than more precise dual-axis trackers.
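To give a feel for what a single-axis tracker has to compute, here is a minimal sketch. It is not SunPower’s control code. It assumes a horizontal tracker whose axis runs north-south (I don’t know the actual orientation of Apple’s trackers), and the function name, the 45-degree rotation limit and the sample sun positions are all hypothetical.

```python
import math


def tracker_rotation_deg(sun_zenith_deg, sun_azimuth_deg, max_rotation_deg=45.0):
    """Ideal rotation for a horizontal single-axis tracker with a north-south axis.

    Azimuth is measured clockwise from north (90 = east, 180 = south).
    Positive results tilt the panel face toward the east, negative toward the west.
    max_rotation_deg is a hypothetical mechanical limit, not a SunPower spec.
    """
    zenith = math.radians(sun_zenith_deg)
    azimuth = math.radians(sun_azimuth_deg)
    east = math.sin(zenith) * math.sin(azimuth)  # east component of the sun direction
    up = math.cos(zenith)                        # vertical component of the sun direction
    angle = math.degrees(math.atan2(east, up))
    # Clamp to the range the hardware is assumed to allow.
    return max(-max_rotation_deg, min(max_rotation_deg, angle))


# Illustrative sun positions only, not measurements from Maiden.
for label, zenith, azimuth in [("morning", 60, 100), ("midday", 20, 180), ("afternoon", 60, 260)]:
    print(label, round(tracker_rotation_deg(zenith, azimuth), 1))
```

A dual-axis tracker would also adjust for how high the sun is along the north-south direction, which is where the extra precision, complexity and cost come from.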

North Carolina isn’t exactly the sunniest place. During my visit it was quite cloudy, as you can see in the pictures. During the winter months (from October to February), when the sun is lower in the sky, Apple’s solar farms are no doubt generating less energy than they are during the peak summer months.

Apple’s solar power farm stretches for 100 acres

You can see in the above picture that the grass is neatly maintained. Apple manages the grass under the panels in a variety of ways, but one of those is a little more unusual. Apple works with a company that ropes in sheep that eat the grass on a portion of the solar farm; when the sheep finish grazing on one spot, they’re moved to the next.

It’s a more sustainable option than running gas-powered mowers across the farm, and also has the added benefit that sheep can get into smaller spaces and up close to the panels. Some companies use goats to eat grass on plots of land, but goats could chew on the farm’s wiring and solar panel parts.

Close-up shot of the panels at Apple’s solar farm

Apple’s second 20-MW solar farm is a 15-minute or so drive away from the data center, past a Big Kmart and the Catawba Valley Rifle and Pistol Club. The second solar farm is less discussed, perhaps because it’s nestled behind a neighborhood and well camouflaged by landscaping from the road. Apple built both of its solar farms with large berms around them, and the rows of panels themselves are mostly nestled down below sight level.

Apple’s second solar farm about 15 miles from its data center in North Carolina

Since the second solar farm is a ways away from the data center, it’s also an example of why Apple’s business with the utility is important. The power goes into the power grid near the solar farm, and Apple can use the equivalent back at its data center.

Apple’s second solar farm in Conover, North Carolina

Apple is building another 20 MW solar panel farm next to its data center in Reno, Nevada, and has said it’s working closely with Nevada utility NV Energy on that one. Apple is one of the first companies to take advantage of a new green tariff approved by Nevada’s utility commission that will enable Apple to pay for the cost of building the solar panel farm. Once again in Reno, Apple is working with SunPower for the solar farm, but this time it is using a different kind of solar technology that increases the amount of power generated (a combination of panels and mirrors that concentrate the sunlight).

The fuel cell farm

Compared to the massive acreage that Apple’s solar panels cover, the fuel cell farm looks quaint. You can walk around it in a couple minutes. In the grand scheme of power generation, fuel cells are weird in that nothing is burned in a fuel cell, in contrast to the combustion that occurs in natural gas and coal plants, or traditional cars that run on fuel. Instead of combustion, fuel cells use a chemical reaction, almost like a battery, to produce electricity.

Apple’s fuel cells were manufactured and are operated by Bloom Energy, a Sunnyvale, Calif.-based company that has raised over a billion dollars in venture capital funding from VCs like Kleiner Perkins and NEA. The boxes suck up a fuel, usually natural gas (but in Apple’s case biogas), combine it with oxygen, run it over plates lined with a catalyst, and through a complex reaction create electricity on site.

Close-up of Apple’s fuel cells, made by Bloom Energy

There are, according to my back-of-the-envelope count, about 50 Bloom Energy boxes at Apple’s fuel cell farm. In total the farm produces 10 MW of power, and each fuel cell produces 200 kW. Apple originally was planning to install 24 fuel cells (for 4.8 MW), but later decided to roughly double the size of the facility.
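My count lines up with the arithmetic, which can be sketched in a few lines. The 200 kW per box and the 24-box and 10 MW figures come from the reporting above; the function names are just for illustration.

```python
import math

UNIT_KW = 200  # output of one Bloom Energy box, as reported above


def farm_output_mw(units, unit_kw=UNIT_KW):
    """Total farm output in MW for a given number of fuel cell boxes."""
    return units * unit_kw / 1000


def units_needed(target_kw, unit_kw=UNIT_KW):
    """Smallest whole number of boxes that reaches the target output (in kW)."""
    return -(-target_kw // unit_kw)  # integer ceiling division


original_plan_mw = farm_output_mw(24)   # the original 24-box plan
boxes_for_10_mw = units_needed(10_000)  # boxes needed for the finished 10 MW farm
growth = farm_output_mw(boxes_for_10_mw) / original_plan_mw

print(f"Original plan: {original_plan_mw} MW from 24 boxes")
print(f"Boxes needed for 10 MW: {boxes_for_10_mw}")
print(f"Growth over the original plan: {growth:.2f}x")
```

So the finished farm is slightly more than twice the size of the original plan, and 10 MW at 200 kW per box works out to the roughly 50 boxes I counted.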

When Bloom Energy first publicly launched its fuel cells in 2010 they cost between $700,000 and $800,000 each, though the price probably has come down since then. Bloom also sells fuel cell energy contracts, where the customer doesn’t pay for the installation, but pays for the energy over a period of several years.
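For a rough sense of scale, here is what about 50 boxes would have cost at those 2010 launch prices. This is list-price arithmetic only, not a figure for what Apple paid: I don’t know the terms of Apple’s deal, prices have probably fallen, and installation and fuel are not included. The function name is hypothetical.

```python
# 2010 launch price range per Bloom Energy box, in U.S. dollars (from the reporting above).
LAUNCH_PRICE_USD = (700_000, 800_000)


def launch_price_range(units, price_range=LAUNCH_PRICE_USD):
    """Low and high equipment cost for a number of boxes at 2010 launch prices."""
    low, high = price_range
    return units * low, units * high


# Assumption: about 50 boxes, per my own count at the farm.
low, high = launch_price_range(50)
print(f"About ${low / 1e6:.0f} million to ${high / 1e6:.0f} million at 2010 launch prices")
```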

Up-close rows of Apple’s fuel cells, made by Bloom Energy

An interesting thing to note: when I was observing the fuel cells operate, I noticed that they produce a lot of heat. You can actually see heat waves rising from the top of the fuel cells. The fuel cells also produce noise from fans that hum to keep the fuel cells cool. I don’t think I quite captured the heat waves rising from the top in the photo, but I took this little video clip so you could hear the noise of the fans.

When I was walking around the outside of the fuel cell facility I could also see a couple of people doing maintenance work on some of the fuel cells. I’m not sure what they were doing exactly, but fuel cells need some level of maintenance to keep them supplied with fuel, as well as to replace moving parts like fans. Every few years they also need to have a key part called the stack replaced, which can lead to expensive maintenance costs for the fuel cell operator.

Apple’s fuel cell farm next to its data center in Maiden, North Carolina

Apple opted to have its fuel cells powered with biogas instead of natural gas. Biogas is methane gas that can be captured from decomposing organic matter, such as waste at landfills, animal waste on farms, and waste at water treatment facilities. Biogas can be used in place of natural gas as a fuel to run buses, cars and trucks, or to run fuel cells. It has the benefit of being cleaner, given that natural gas is a fossil fuel.

But the problem with biogas is that it’s notoriously difficult to economically source in large amounts and pipe to places like the Apple data center. Biogas is also more expensive than natural gas, which likely added even more onto the cost of Apple’s clean power facilities.

Apple’s fuel cell farm in Maiden, which has a total output of 10 MW

An unconventional move

Apple’s solar farms at one point were controversial both outside of Apple and likely inside, too. The solar farms use a large amount of land, which had to be raised and prepared for the panels. Back in 2011, when Apple was clearing the land, some local residents complained about burning foliage and smoke blowing toward their houses.

Buying clean power from a utility that had offered it would have no doubt been a considerably cheaper option — but in 2010 and 2011 that wasn’t available. It’s still not even officially available now, and is pending the state regulator’s approval of Duke Energy’s pilot project.

But the cost that the clean power installations added to Apple’s data center project in North Carolina was probably substantial. A 20 MW solar panel farm could cost around $100 million to build back in 2010 and 2011, though the cost could be less now that the price of solar panels has dropped in recent years. Apple could also have negotiated a lower price since it’s such a high-profile company. Apple’s entire data center project in North Carolina was billed as a billion-dollar data center when it was announced years ago.

It’s also a controversial move for an internet company to get into the energy generation business. But as more and more megascale data centers are being built, and more web services are being moved to the cloud, internet companies are spreading out their investments and innovations from inside the data centers on the server level to outside the data center, down to the energy level. For example, last week Amazon said that it has been building its own electric substations and even has firmware engineers rewrite the archaic code that normally runs on the switchgear designed to control the flow of power to electricity infrastructure.

But more efficient energy infrastructure is one thing, and clean power, which is not an obvious economic advantage, is another. Apple’s peers, such as Facebook and Google, have not (yet) followed in Apple’s footsteps when it comes to building their own clean power plants. Microsoft and eBay have been experimenting with clean power for their data centers but on a much smaller scale.

I’ve asked Google and Facebook execs multiple times over the years if they plan to build their own clean energy generation, and many times they’ve said that while they haven’t ruled it out, they aren’t yet publicly planning anything. I know that Google has discussed this issue internally at length, and I’ve heard it has even gone so far as to hire the former Director of the Department of Energy’s ARPA-E program, Arun Majumdar, in part to help look into this issue. But Google hasn’t announced any plans.

Of course, Google’s clean energy investments have definitely had an impact. The company has put more than a billion dollars into a Hoover Dam’s worth of clean energy projects, mostly wind farms and solar farms, over a period of several years. The bulk of its investments have been made by investing equity in a wind or solar farm, and also by contracting with a utility to buy clean power from a project for a nearby data center.

But Apple’s move was unusual: it was an aggressive move into an entirely new area for Apple, it was pretty secretive (although that’s standard operating procedure at Apple), and it cost more money than the standard approach. And Apple plans to continue this method, allowing it to use what it has learned for future projects.

In the world of clean energy there are a lot of ways that companies can pay to green their operations — many buy renewable energy credits that offset consumption of fossil fuel based energy. But building solar farms and a fuel cell farm next to a data center could be the surest way to add clean power in a way that can be validated and seen by the public. It seems like Apple execs thought if they were going to commit to the whole idea of clean energy, it was going to be all the way.

The effects of the clean energy projects on Apple’s brand also can’t be discounted. Apple has a powerful and potentially fragile consumer brand, and the data center in North Carolina was a major push for Apple to move more heavily into cloud services. A record for the largest privately owned solar farm in the U.S. could add significant branding capital to a brand trying to stay on top.

The effect of Apple’s clean energy move

I hope that this series of photos I took shows the extent to which Apple has gone to create its own clean power sources in a state that at one time wasn’t offering it any other options. And while Apple doesn’t publicly comment on its moves, its actions have no doubt had a strong effect on Duke Energy, on its internet peers, and on North Carolina’s clean power options. Many conversations I’ve had with execs over the past few years have confirmed this.

Google has publicly been working with Duke Energy all year on the recently announced clean power buying plan, but Apple’s decision to move forward with its own facilities — with or without the utility — likely provided a key leverage point. What’s more influential and powerful: friendly encouragement, or a fear that you’re going to be bypassed and lose out on opportunities?

The reality is that data centers, and the internet companies that are building them, are becoming major power users. Some 2 percent of the total electricity in the U.S. as of 2010 was consumed by data centers and this consumption will only grow as more web services are put in the cloud. Most data centers are largely run off of power grids supplied by coal and natural gas plants.

But a handful of these leading internet companies like Apple, Google, Facebook and others have been investing both money and time in ways to find and create more clean power for their data centers. The past five years have been a time of transition for these companies, as they move clean power up the list in importance for their data centers. Another example of how far they’ve come is that last week Facebook announced that it’s building a data center in Iowa that will be fully powered by wind energy.

The times, they are a-changing. Of course not every data center operator is able to pay a premium to buy clean power, and very few are willing to invest even more in building their own clean power projects. But eventually clean power from sources like huge wind farms won’t carry a premium; it already doesn’t in some places like Iowa. Google, which has invested in a variety of wind farms, has seen the costs of its contracts drop over the years.

Down the road more companies that use colocation data center services will want clean power options, too. Last week at an event organized by Greenpeace (and moderated by me), Box and Rackspace talked about some of the options they had for clean power. Greenpeace, and many in the industry, are hoping that Amazon and its dominating AWS will start to adopt more clean power down the road.

Change oftentimes happens incrementally. From the outside, that is what happened with clean power and internet companies in North Carolina. But sometimes crucial change happens with a single brush stroke or a single outlier decision. That’s how I see Apple’s clean power facilities in North Carolina: right now, they stand alone.

Via: gigaom