The number of products having perfect scores is up in AV-TEST’s latest test for virus protection for Windows 7, and Microsoft is still at the bottom.

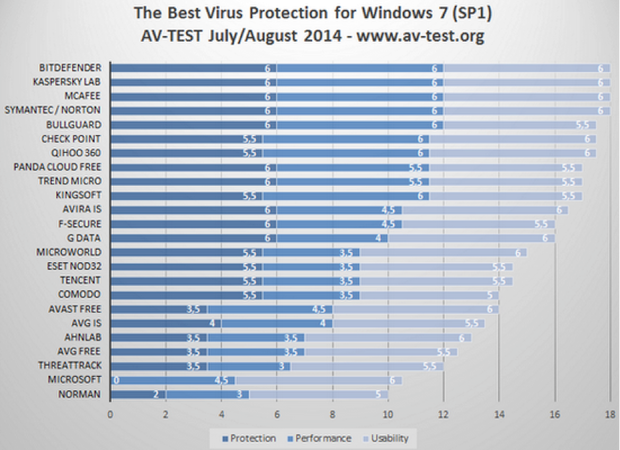

AV-TEST Institute’s August 2014 (Windows 7 SP1) test results

Image: AV-TEST

Back in the day, a person could raise the hood and get the car running again. That is no longer the case. Deciding whether an antivirus application is working is not all that different. There was a time when feeding an EICAR test file to the antivirus program was enough of a test. In today’s complex digital world, EICAR alone cannot establish the value of an antivirus application. Like today’s vehicles, antivirus software needs testing by experts.

Carrying the analogy further, one hopes to find a great mechanic who charges reasonable rates. Finding an unbiased, independent test lab to help decide which AV protection solution best fits the company’s needs is just as important. AV-TEST Institute is one test lab gaining that kind of reputation, and it is affordable: it provides detailed free test reports on most antivirus products now marketed.

AV-TEST Institute’s August 2014 (Windows 7 SP1) test results are now available. The company reports on 33 antivirus applications: 24 consumer-oriented antivirus programs and 9 corporate-endpoint protection packages. This article focuses on the corporate antivirus test results; the consumer antivirus test results are posted here.

Here’s more about AV-TEST and how the company works.

In-house testing software

To get accurate test results, the developers at AV-TEST use their own test software. Sunshine, one of the software tools, analyzes what happens to the file system, registry, internal processes, and memory of the test computer, which is loaded with the AV application being tested. Sunshine also monitors network traffic to and from the test computer when malware is executed.

VTEST, another AV-TEST software tool, melds 40 different antivirus scanners into a cohesive unit, allowing test engineers to monitor malware activity as it executes. The engineers then compute the reaction times of the antivirus product being tested.

Test modules

With the tools in place, AV-TEST staff then look at three specific categories: Protection, Performance, and Usability. Maik Morgenstern, part of the CEO team and technical director, clarified the significance of each during an email conversation.

Protection: This category tests an antivirus application’s effectiveness against current online threats (zero-day and web/email malware). Each test computer visits 150 to 200 known malicious websites, and the protection software tool checks if the AV product can detect and block any attack. The test computer is also subjected to malicious files and watched to see if the AV software detects known threats embedded in the files.

The significance of visiting 150 to 200 websites for protection testing was unclear. How are the websites chosen? Morgenstern said, “AV-TEST systems crawl the Web looking for potential malicious content every day, analyzing tens of thousands of websites. If a harmful website is discovered, it is added to the test regimen.”

Morgenstern also said that all computers loaded with an AV product being tested are subjected to the malicious URLs simultaneously to ensure each AV product faces the same parameters. The testers then proceed as follows:

- Check if the malicious URL is blocked.

- If not blocked, the malicious content is allowed to download.

- If the download is not blocked, the malicious content is allowed to execute.

- If the malware is then detected, that fact is recorded; if not detected, the tester waits two more minutes to see if the AV product locates the malware.

- After the online portion is completed, the computer’s system-state is checked to see if the malware was successful in altering any of the system files.
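The steps above amount to a staged check in which each stage is reached only if the previous one failed to stop the threat. The following is a minimal sketch of that flow, not AV-TEST's actual tooling: the `Observation` record, the `Outcome` names, and the `classify` function are all hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    """Stage at which the AV product stopped the threat (hypothetical labels)."""
    URL_BLOCKED = "url blocked"
    DOWNLOAD_BLOCKED = "download blocked"
    DETECTED_ON_EXECUTION = "detected on execution"
    DETECTED_AFTER_WAIT = "detected after two-minute wait"
    MISSED = "missed"


@dataclass
class Observation:
    """What a tester recorded for one AV product on one malicious URL."""
    url_blocked: bool
    download_blocked: bool
    detected_on_execution: bool
    detected_after_wait: bool  # found during the extra two-minute wait
    system_altered: bool       # result of the final system-state check


def classify(obs: Observation) -> Outcome:
    """Walk the stages in order; the first stage that stops the threat wins."""
    if obs.url_blocked:
        return Outcome.URL_BLOCKED
    if obs.download_blocked:
        return Outcome.DOWNLOAD_BLOCKED
    if obs.detected_on_execution:
        return Outcome.DETECTED_ON_EXECUTION
    if obs.detected_after_wait:
        return Outcome.DETECTED_AFTER_WAIT
    return Outcome.MISSED


if __name__ == "__main__":
    # Illustrative observation: URL and download got through, caught on execution.
    obs = Observation(False, False, True, False, system_altered=False)
    print(classify(obs).value, "| system altered:", obs.system_altered)
```

The system-state result is kept as a separate field rather than folded into the outcome, since the article describes it as a check made after the online portion is finished.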

Performance: This testing measures the impact an antivirus package has on the speed of the computer. Five scenarios are covered: downloading files, visiting websites, installing applications, using applications, and copying files. The results are compared to a clean computer without AV software installed. The test is repeated multiple times to eliminate outliers and allow AV-TEST engineers to calculate a stable average.
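As a rough illustration of comparing repeated runs against a clean baseline, here is a minimal sketch. The article does not say how AV-TEST removes outliers or averages results, so the use of the median here is an assumption, and the function name `overhead_percent` and the timings are made up for the example.

```python
from statistics import median


def overhead_percent(baseline_runs, av_runs):
    """Slowdown of the AV-loaded machine relative to the clean machine, in percent.

    Assumption: the median of repeated runs stands in for a "stable average",
    because it is not skewed by a single unusually slow or fast run.
    """
    if not baseline_runs or not av_runs:
        raise ValueError("both run lists must be non-empty")
    base = median(baseline_runs)
    with_av = median(av_runs)
    return (with_av - base) / base * 100.0


if __name__ == "__main__":
    # Illustrative timings in seconds for one scenario, such as copying files.
    clean = [10.0, 10.2, 9.8]
    loaded = [12.0, 12.5, 30.0]  # the 30.0 run is an outlier
    print(f"{overhead_percent(clean, loaded):.1f}% slower")
```

With these sample numbers the outlier run of 30.0 seconds does not move the result, which is the point of repeating the test.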

Usability: An AV program has to instill trust by not issuing false positives. Throughout testing an AV package, the test engineers track how many false detections are generated, and how they might affect users. The following test parameters are used:

- AV-loaded test computers visit 500 websites known to be benign; any false positives are noted.

- AV-loaded test computers scan thousands of files (also benign) looking for false detections.

- Forty popular and malware-free applications are installed on each computer with engineers noting whether the AV application considered the newly-installed program a threat.
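Each of these checks boils down to counting false detections against a known-clean sample. A minimal sketch of that arithmetic follows; the function name `false_positive_rate` and the flagged counts are hypothetical, and only the sample sizes of 500 websites and 40 applications come from the description above.

```python
def false_positive_rate(flagged: int, total: int) -> float:
    """Fraction of known-benign items that the AV product wrongly flagged."""
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= flagged <= total:
        raise ValueError("flagged must be between 0 and total")
    return flagged / total


if __name__ == "__main__":
    # Flagged counts are invented for illustration only.
    print(f"websites: {false_positive_rate(2, 500):.2%}")
    print(f"installs: {false_positive_rate(1, 40):.2%}")
```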

That is how each antivirus program is tested. Now let’s see what results the team at AV-TEST obtained.

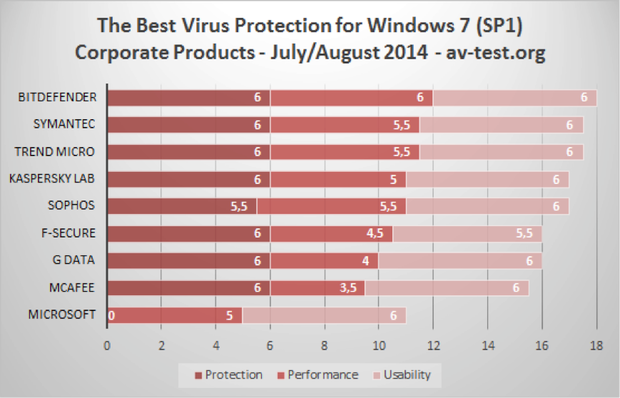

Image: AV-TEST

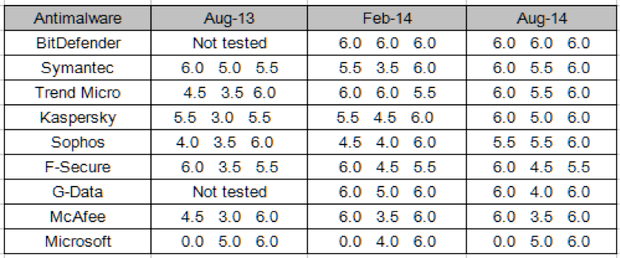

The good news is obvious, and so is the bad news about Microsoft. For specific test results and more detailed explanations please refer to the online test results for each product. The next slide is a comparison of the current test results with two earlier reports, showing whether an application improved or not. The test scores (0.0 to 6.0) in each of the categories are arranged in the following order: Protection, Performance, Usability.

Image: AV-TEST

Morgenstern’s comment about the results is encouraging: “Overall, we are seeing a positive trend in the malware-detection results.” He added, “Only the Microsoft products are falling behind with 20 percent smaller detection rates than the average.”

Morgenstern also said, “Please note the percentages of the results are mathematically rounded. All test criteria were developed in close cooperation with the developers and users of these tools. Vendors could cross-check the results.”

Via: techrepublic
